
The Brief

Open-Weight AI's Single Point of Failure

By Jason Xi · 4 min read · OPINION

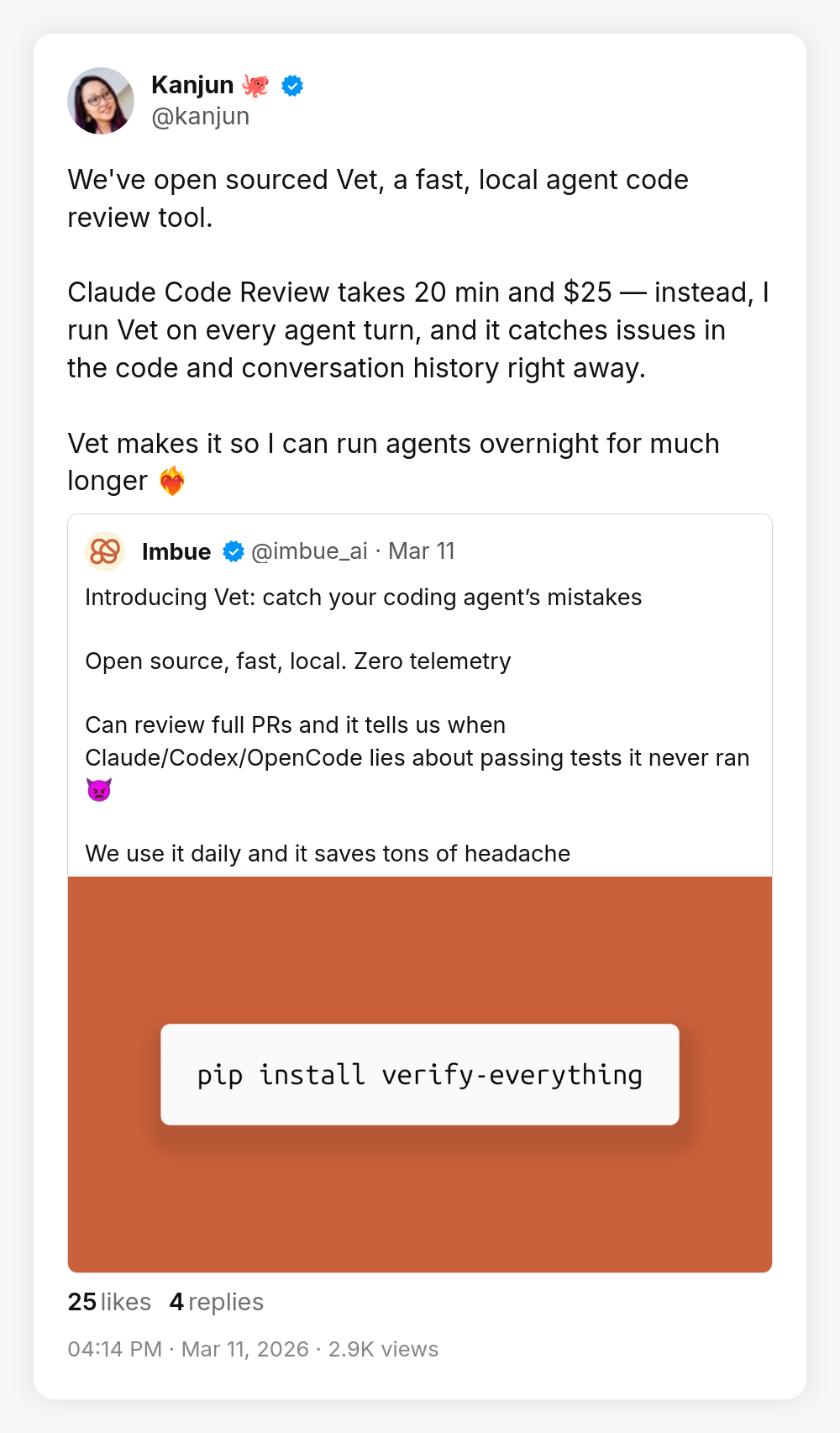

Zuckerberg's July letter included a sentence that should concern anyone with Llama in their production stack: "We'll need to be careful about what we choose to open source." That's not a safety disclaimer. That's a strategy change wearing one.

Meta's open-weight play was never philanthropy - it was classic commoditize-the-complement. Zuckerberg said it explicitly: standardize the industry on Meta's tools so Google and OpenAI can't monetize theirs. As long as Meta didn't need to sell AI directly, giving it away made strategic sense. But Meta's now spending $115-135B on AI infrastructure in 2026, comparable to the cloud giants, except without a cloud business to monetize it. Their new "Avocado" model is explicitly proprietary. The most likely outcome is freemium: frontier models behind an API, older versions released open-weight. The Llama you'll get going forward is last season's inventory, not the flagship.

The numbers already reflect this. Open-source models power just 13% of enterprise AI workloads, down from 19% six months ago. Llama holds 9% enterprise market share despite 1.2 billion downloads. Anthropic alone captures 42% of enterprise coding workloads. The chasm between "downloaded Llama" and "running Llama in production" was already enormous before this shift. Meta going proprietary doesn't create the problem - it removes the hope that the problem was temporary.


If you built your AI roadmap around the assumption that competitive open-weight models from a trusted Western provider would keep improving indefinitely, you built on someone else's commoditization strategy.


The optimistic counter is that open-weight supply has diversified. Partially true. Nvidia's committing $26B to open models like Nemotron, but those are optimized for Nvidia hardware - it's the Android playbook. Chinese labs keep releasing competitive weights, but most Western enterprises won't deploy Chinese-origin models on anything touching customer data. Mistral is strong but operates at a fraction of Meta's scale. What made Llama strategically unique wasn't just that it was open. It was that it came from a tech giant with no competing cloud business and no reason to hold back. Every replacement has a different incentive structure, and you need to understand what you're giving up with each one.

A roadmap built on another company's incentives is not a foundation. It's a dependency you forgot to flag. This quarter, audit every place "open-source model" appears in your planning docs and ask what the actual fallback is when the next Llama release isn't competitive.
